United States: Faced with the impracticality of controlling the rapid evolution of generative Artificial Intelligence (AI) tools and students' widespread access to them, K-12 school districts worldwide are shifting their official stance from outright AI bans to flexible AI “guardrails” and usage guidelines. This policy pivot is driven by the reality that hard bans are unenforceable, pedagogically counterproductive, and quickly obsolete.

This emergent policy framework centers on ethical usage, critical AI literacy, and pedagogical alignment rather than prohibition:

- Ethical Usage & Integrity (Assessment): Policies are moving toward requiring students to cite AI usage explicitly and to submit a process-focused defense of their work alongside the final product. This shifts the focus of assessment from the output itself to the student’s ability to critically analyze and refine AI-generated content.

- Privacy and Security: Guardrails mandate strict adherence to student privacy and security regulations (such as FERPA in the US), requiring teachers to vet third-party AI tools and prohibiting students from entering personally identifiable information into public generative models.

- Digital Literacy: The most significant shift is treating the use of AI tools as a new form of digital literacy. Rather than assuming that AI will erode academic skills, the policy encourages teachers to use AI to foster future skills such as prompt engineering, source verification, and the evaluation of algorithmic bias.

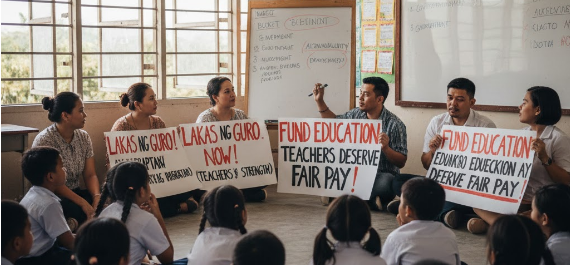

This approach reflects a growing consensus among school leaders and teacher preparation programs that the long-term goal is not to eliminate AI but to build workforce readiness by preparing students to work with these powerful tools responsibly and ethically. The guidelines function as living documents, designed to be easily updated as the technology and the curriculum evolve.